Most AI demos are impressive for about five minutes. An agent answers a question, writes some code, and maybe calls an API. Then you realize it can only respond when prompted; it does not actually do anything on its own.

With OpenClaw, we wanted something different.

We wanted a system that wakes up, makes decisions, executes tasks, reacts to what happens, and loops back on itself without anyone telling it to start. Instead of a reactive demo, OpenClaw was designed to behave like an operational engine. It should plan, act, evaluate, and iterate continuously.

Essentially, we wanted to see if we could run a real company using AI agents powered by OpenClaw, operating end-to-end without human intervention.

This post is the full technical breakdown of how we built it: the architecture, the stack, the agent roles, the mistakes we made, and what it actually looks like when it runs. We built it on top of OpenClaw for agent orchestration, Vercel as the control plane, and Supabase as the persistent state layer. The core closed-loop system took about a week to get working. The rest was hardening it against the ways it tried to break itself.

First, What Is OpenClaw?

If you have not encountered it yet, OpenClaw is an open-source AI agent framework that surpassed 145,000 GitHub stars in early 2026, making it one of the fastest-growing developer projects in recent memory. Originally released as Clawdbot by developer Peter Steinberger, it is not a chatbot. It is a local gateway process that connects to messaging platforms and routes incoming signals through an LLM-powered agent that can take real actions, not just respond in text.

The key architectural insight OpenClaw embodies is the separation between the orchestration layer and the model layer. The Gateway handles routing, sessions, connectivity, and cron scheduling. The Agent Runtime handles reasoning and execution. That separation is what makes it composable for building systems like ours; you can wire complex multi-agent logic into the orchestration layer without the model itself needing to manage coordination.

For our use case, we extended OpenClaw’s Gateway to serve as the trigger and routing layer, and built a custom mission execution engine on top of it. More on that below.

Why Build An Autonomous AI Company?

The shift happening in AI right now is from chatbots to operators. A chatbot responds when asked. An operator acts on its own; it identifies what needs doing, creates a plan, executes it, handles failures, and responds to what the execution produced. The difference sounds subtle until you actually build one, at which point it becomes obvious how much harder autonomous operation is than conversational AI.

We were not trying to replace humans entirely; we are realistic about that. What we wanted to test was: can a system of specialized AI agents run the day-to-day operations of a content and social media business without requiring a human to initiate each task? Research, strategy, content creation, publishing, quality control, and decision-making are all triggered, executed, and completed by agents reacting to each other and to external signals.

The answer, after two weeks of building and a lot of debugging: mostly yes. The system runs unattended for long stretches. Humans are still in the loop for exception handling and strategic direction. But the operational layer, the grinding daily execution, runs on its own.

Our AI Tech Stack Explained: OpenClaw + Vercel + Supabase

OpenClaw, The Agent Orchestration Engine

OpenClaw acts as the brain and execution layer. Its Gateway runs as a persistent process on our VPS, handling incoming events, routing signals to the appropriate agents, and managing the cron scheduler that drives time-based triggers. We use its multi-agent routing capability to isolate each of our six specialist agents into separate workspaces, with defined communication channels between them.

What makes OpenClaw particularly useful for a system like ours is that it treats tool use as first-class. Agents can run shell commands, call APIs, write and execute code, and interact with external services, all within the same orchestration layer that handles their conversational logic. That means an agent can decide to do something and do it, without a separate execution pipeline.

Vercel, The Control Plane for AI Automation

We deliberately separated execution from control. Vercel handles the control plane: API routes that evaluate trigger conditions, route proposals through approval logic, and manage the policy checks that govern what agents are allowed to do and when. Serverless functions on Vercel are ideal for this because trigger evaluation needs to be fast, stateless, and independently scalable; it should not share runtime with the heavy task execution happening on the VPS.

The mental model: Vercel decides what should happen. The VPS actually makes it happen. If the VPS goes down, Vercel keeps evaluating triggers and queuing proposals. When the VPS comes back up, it picks up the queue and executes. That decoupling makes the system significantly more resilient than a monolithic architecture where orchestration and execution run in the same process.

Supabase, The AI Agent Memory and State Layer

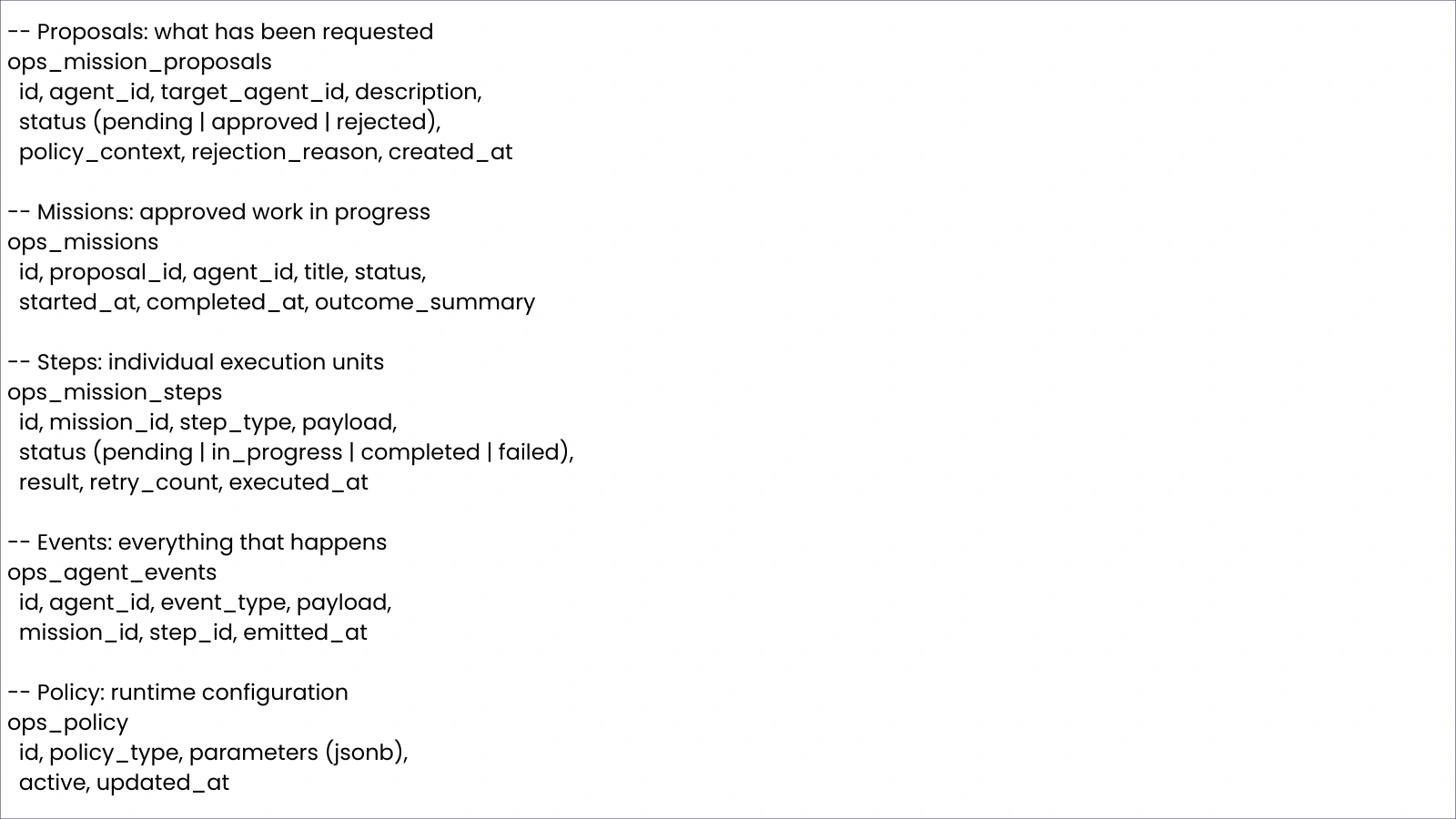

Supabase is the single source of truth for everything in the system. Every proposal, mission, step, event, and policy record lives in Supabase. Agents do not hold state locally; they read from and write to the database on every operation. This is critical for autonomous systems: if any component restarts, it can reconstruct exactly where it was by reading the current state from the database rather than relying on an in-memory context that may have been lost.

The core tables are: ops_mission_proposals (what has been requested), ops_missions (approved work in progress), ops_mission_steps (individual execution units within a mission), ops_agent_events (everything that happens, emitted as a log), and ops_policy (runtime configuration for quotas, caps, and behavioral rules). The event log, in particular, is what makes the system debuggable. When something goes wrong, you can trace exactly what each agent did and when.

The Closed-Loop AI Agent Architecture: From Proposal to Execution

The core innovation of our system is not any individual component; it is the loop. Here is how a single piece of work flows through the system end-to-end:

Proposal Creation: An agent or a trigger identifies something that needs doing and creates a proposal record in ops_mission_proposals. A proposal contains the work description, the requesting agent, the target agent, and the policy context that applies.

Auto-Approval and Policy Checks: The Vercel control plane evaluates the proposal against active policies, quota checks, cap gates, and deployment rules. If the proposal passes all checks, it is automatically approved. If it fails, it is rejected immediately with a reason, so the queue does not fill with work that can never be executed.

Mission and Step Generation: An approved proposal triggers mission creation in ops_missions. The assigned agent breaks the mission into discrete steps stored in ops_mission_steps, each with a defined type that maps to a specific worker on the VPS.

VPS Worker Execution: The VPS polls for pending mission steps, maps each step type to its executor, and runs them sequentially or in parallel depending on dependencies. Workers handle their own retry logic and mark steps as completed or failed upon finishing.
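As a rough illustration of the worker pass described above, here is a minimal in-memory sketch. The `Step` fields, the executor names (`draft_post`, `publish_post`), and the retry limit are assumptions made for the example, not our production code, and it only covers the sequential case.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Step:
    id: int
    step_type: str
    payload: dict
    status: str = "pending"  # pending -> in_progress -> completed | failed
    attempts: int = 0


# Hypothetical executors; real ones would call external services.
def draft_post(payload: dict) -> None:
    if not payload.get("topic"):
        raise ValueError("missing topic")


def publish_post(payload: dict) -> None:
    pass


# Each step type maps to exactly one executor.
EXECUTORS: Dict[str, Callable[[dict], None]] = {
    "draft_post": draft_post,
    "publish_post": publish_post,
}

MAX_ATTEMPTS = 3  # assumed retry limit


def run_pending_steps(steps: List[Step]) -> None:
    """One polling pass: run every pending step with its mapped executor."""
    for step in steps:
        if step.status != "pending":
            continue
        executor = EXECUTORS.get(step.step_type)
        if executor is None:
            step.status = "failed"  # no worker knows this step type
            continue
        step.status = "in_progress"
        for attempt in range(1, MAX_ATTEMPTS + 1):
            step.attempts = attempt
            try:
                executor(step.payload)
                step.status = "completed"
                break
            except Exception:
                # Retry until the attempt budget is spent.
                if attempt == MAX_ATTEMPTS:
                    step.status = "failed"
```

In a real deployment the list of steps would come from a query against ops_mission_steps, and each status change would be written back to the database.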

Event Emission: Every meaningful action (a step completing, a step failing, a mission being finalized, a quota threshold being hit) emits an event to ops_agent_events. Events are the nervous system of the whole system.

Trigger and Reaction Re-Evaluation: The Vercel control plane watches the event stream. Certain events trigger reactions: a viral content event might trigger a new research proposal, a failure event might trigger a diagnostic mission, and a quota threshold hit might pause a category of work. The reaction matrix is what makes the system feel alive rather than mechanical.

That loop is what transforms “agents that can talk” into “agents that can operate.” Once it is running, workflows run through the system continuously without human initiation.

Designing A Multi-Agent AI Company: Roles and Responsibilities

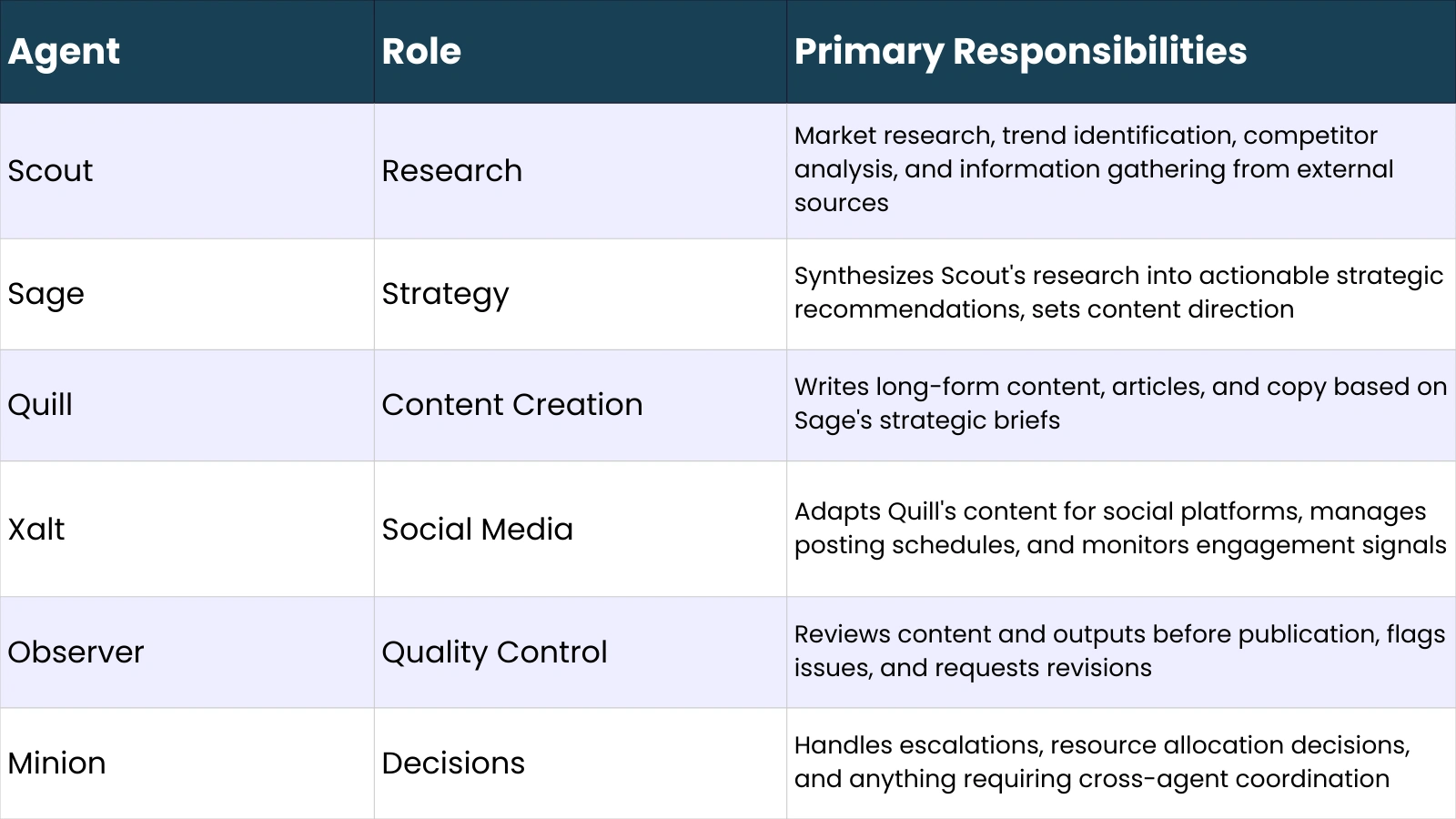

We created six specialized agents rather than one generalist agent for a specific reason: role separation dramatically improves output quality and system stability. A single agent trying to do research, strategy, content creation, publishing, quality control, and decision-making simultaneously produces mediocre output across all of them and creates unpredictable behavior when tasks conflict. Specialized agents with defined mandates are more reliable and easier to debug.

The agent names are intentional; they are memorable enough to reference quickly during debugging, which matters more than you might expect when you are reading event logs at midnight, trying to figure out why a mission stalled.

The 3 Biggest Mistakes We Made Building Autonomous AI Agents

Mistake #1: Dual Executors Causing Race Conditions

Early in development, we had both the VPS worker and a Vercel serverless function capable of picking up and executing mission steps. We thought redundancy would improve reliability. What it actually produced was race conditions; two executors would grab the same step simultaneously, both would mark it as in-progress, and both would try to finalize it. The result was duplicate outputs, corrupted mission state, and hours of debugging to understand why missions were completing with conflicting results.

The fix was a non-negotiable rule we now apply to every system we build: single execution authority. One component picks up and executes work. Everything else can observe, trigger, and approve, but only one thing executes. We moved all execution exclusively to the VPS worker and made Vercel strictly the control plane. Race conditions disappeared immediately.
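The fix we applied was architectural: one executor. A complementary guard, which is not what we describe above, is to make claiming a step atomic so that a second executor cannot win even if one is accidentally reintroduced. The sketch below shows that idea using SQLite as a stand-in for the real database; the `claimed_by` column and the worker names are assumptions for illustration.

```python
import sqlite3


def claim_step(conn: sqlite3.Connection, step_id: int, worker: str) -> bool:
    """Atomically move a step from pending to in_progress.

    Returns True only for the caller whose UPDATE actually changed the row.
    """
    cur = conn.execute(
        "UPDATE ops_mission_steps "
        "SET status = 'in_progress', claimed_by = ? "
        "WHERE id = ? AND status = 'pending'",
        (worker, step_id),
    )
    conn.commit()
    return cur.rowcount == 1


# In-memory demo table with a single pending step.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE ops_mission_steps ("
    "id INTEGER PRIMARY KEY, status TEXT NOT NULL, claimed_by TEXT)"
)
conn.execute("INSERT INTO ops_mission_steps (id, status) VALUES (1, 'pending')")
conn.commit()

first = claim_step(conn, 1, "vps-worker")   # wins the claim
second = claim_step(conn, 1, "vercel-fn")   # loses: the row is no longer pending
```

The `WHERE status = 'pending'` condition is what does the work: the status check and the status change happen in one statement, so two callers cannot both see the step as available.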

Mistake #2: Triggers That Created Dead-End Proposals

We had a trigger that fired when certain content performance thresholds were hit, creating a new research proposal. What we forgot was that the auto-approval logic had not been configured for proposals generated by that trigger path. The proposals were created, sat in the queue as pending, and the trigger kept firing, generating more proposals for the same threshold event, none of which were being approved or rejected.

The lesson: every proposal must have exactly one entry point into the approval flow. If a proposal can be created through a path that bypasses auto-approval evaluation, you will eventually accumulate a backlog of orphaned proposals that clog the queue and trigger false-positive monitoring alerts. We added a validation step that rejects any proposal that cannot be routed to a policy evaluator at creation time.
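A minimal sketch of that creation-time validation, assuming a registry that maps each creation path to a policy evaluator. The registry keys, the `Proposal` fields, and the exception name are hypothetical and chosen only for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Proposal:
    source: str          # trigger path or agent that created the proposal
    target_agent: str
    description: str
    status: str = "pending"


# Hypothetical registry: every creation path must have an evaluator here.
EVALUATORS: Dict[str, Callable[[Proposal], bool]] = {
    "cron:daily_research": lambda p: True,
    "reaction:mission_failed": lambda p: True,
}


class UnroutableProposal(Exception):
    """Raised when a proposal has no policy evaluator to approve or reject it."""


def create_proposal(
    queue: List[Proposal], source: str, target_agent: str, description: str
) -> Proposal:
    # Refuse to enqueue work that nothing will ever approve or reject.
    if source not in EVALUATORS:
        raise UnroutableProposal(f"no policy evaluator registered for {source!r}")
    proposal = Proposal(source, target_agent, description)
    queue.append(proposal)
    return proposal
```

Failing loudly at creation time turns a silent backlog into an immediate, visible error on the trigger path that caused it.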

Mistake #3: Infinite Queue Growth from Quota Failures

We had quota limits on how many tweets Xalt could post per day. What we did not have was pre-approval quota checking; the system would approve a mission, generate all its steps, and then fail at the execution stage when the quota was already exhausted. The mission would sit as partially completed, new proposals would continue to be approved, and the queue would grow continuously with work that could never finish.

The principle we extracted: reject at the gate, not at execution. Every constraint (quota limits, cap gates, and dependency requirements) should be evaluated at the proposal approval stage. If a mission cannot be completed given the current system state, it should be rejected immediately with a clear reason, not admitted into the execution queue to fail partway through. This single change reduced our queue size by roughly 60% and eliminated an entire class of stuck-mission debugging sessions.
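To make "reject at the gate" concrete, here is a small sketch of a pre-approval quota check. It assumes the timestamps of past actions (for example, posts) are available from the event log, and that the proposal states how many actions it would need. The function name and parameters are illustrative.

```python
from datetime import datetime, timedelta
from typing import List, Tuple


def quota_allows(
    event_times: List[datetime],
    now: datetime,
    window: timedelta,
    limit: int,
    requested: int,
) -> Tuple[bool, str]:
    """Decide at approval time whether a mission fits in the remaining quota."""
    # Count only the actions that fall inside the current window.
    used = sum(1 for t in event_times if now - window < t <= now)
    if used + requested > limit:
        return False, f"quota exceeded: {used} used + {requested} requested > {limit}"
    return True, "ok"
```

The key detail is that `requested` is counted up front: a mission that needs two posts when only one remains is rejected before any steps are generated.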

Building a Policy-Driven AI Governance System

One of the best decisions we made early was externalizing all behavioral rules into a policy table rather than hardcoding them. Every quota, cap, behavioral parameter, and approval rule lives in ops_policy as a runtime configuration record. Changing how many tweets Xalt posts per day does not require a code deployment; it requires updating a database record.

The policy engine evaluates three main categories of rules at proposal approval time:

- Cap gates: hard limits on how many missions of a given type can be active simultaneously. Prevents agents from spawning unbounded parallel work.

- Quota checks: time-windowed limits on specific action types (posts, API calls, and content items), evaluated against the event log for the current window.

- Deployment policies: rules about when certain types of work are allowed to run. We use these to prevent publishing activity during certain hours and to throttle activity when external API rate limits are approaching.

Runtime configuration beats hardcoding for autonomous systems for a simple reason: the system needs to be tunable without restarts. When an agent is behaving unexpectedly, you want to be able to dial down its activity immediately, not push a hotfix and redeploy.
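The sketch below illustrates the runtime-configuration idea with cap gates and deployment policies. The policy records stand in for rows in ops_policy, and the shape of `params` (including `max_active` and `blocked_hours`) is an assumption for the example. A quota check would slot in as one more `kind`.

```python
from typing import Dict, List, Tuple

# Stand-in for rows in ops_policy; the "params" shape is assumed.
POLICIES: List[dict] = [
    {"kind": "cap_gate", "params": {"mission_type": "research", "max_active": 2}},
    {
        "kind": "deployment",
        "params": {"mission_type": "publish", "blocked_hours": [0, 1, 2, 3, 4, 5]},
    },
]


def evaluate(
    mission_type: str,
    active_counts: Dict[str, int],
    hour: int,
    policies: List[dict],
) -> Tuple[bool, str]:
    """Check a proposal against every policy that applies to its mission type."""
    for policy in policies:
        params = policy["params"]
        if params.get("mission_type") != mission_type:
            continue
        if policy["kind"] == "cap_gate":
            active = active_counts.get(mission_type, 0)
            if active >= params["max_active"]:
                return False, f"cap gate: {active} active {mission_type} missions"
        elif policy["kind"] == "deployment":
            if hour in params["blocked_hours"]:
                return False, f"deployment policy: {mission_type} blocked at hour {hour}"
    return True, "approved"
```

Because the rules are data, raising `max_active` in the policy record changes the next evaluation without touching `evaluate` itself.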

Trigger Systems And The Reaction Matrix

Triggers are what make the system feel like it is paying attention to the world rather than just grinding through a fixed task list. We have three categories of triggers:

- Automation triggers: fire in response to external signals, such as a piece of content performing unusually well, a competitor posting something relevant, or an API returning an error. These create proposals for agents to respond to what just happened.

- Cron triggers: scheduled triggers that drive recurring work, such as daily research cycles, weekly strategy reviews, and content calendar maintenance. These run through OpenClaw’s built-in cron scheduler.

- Reaction triggers: fire in response to system events, such as a mission completing, a step failing, or a quota threshold being reached. These are how agents respond to each other’s outputs.

The reaction matrix defines what happens when specific events occur. It is not fully deterministic, and that is deliberate. A fully deterministic reaction matrix produces robotic behavior where the same input always produces the same output. We introduced probabilistic behavior: a viral content event triggers a follow-up research mission 80% of the time, and nothing 20% of the time. The system makes slightly different choices on different days, which produces more varied and natural-feeling output.

We also implemented cooldown logic to prevent trigger loops. Without cooldowns, a reaction trigger could produce an event that fires another reaction trigger, which produces another event, and so on. Cooldown windows define a minimum time between triggers of the same type from the same source, effectively breaking potential loops before they start.
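Here is a minimal sketch of a probabilistic reaction matrix with cooldowns. The event names, proposal names, and cooldown lengths are assumptions for illustration; only the 80% figure comes from our setup. The cooldown here starts when a trigger actually fires, which is one reasonable reading among several.

```python
import random
from typing import Dict, Optional, Tuple

# Hypothetical matrix: event type -> reaction, firing probability, cooldown.
REACTION_MATRIX = {
    "content_viral": {"proposal": "follow_up_research", "probability": 0.8, "cooldown_s": 3600},
    "step_failed": {"proposal": "diagnostic_mission", "probability": 1.0, "cooldown_s": 600},
}


class Reactor:
    def __init__(self, rng: random.Random) -> None:
        self.rng = rng
        # Last firing time per (event type, source) pair.
        self.last_fired: Dict[Tuple[str, str], float] = {}

    def react(self, event_type: str, source: str, now: float) -> Optional[str]:
        """Return the proposal to create for this event, or None."""
        rule = REACTION_MATRIX.get(event_type)
        if rule is None:
            return None
        key = (event_type, source)
        last = self.last_fired.get(key)
        # Cooldown: same trigger type from the same source fired too recently.
        if last is not None and now - last < rule["cooldown_s"]:
            return None
        # Probabilistic gate: sometimes the system deliberately does nothing.
        if self.rng.random() >= rule["probability"]:
            return None
        self.last_fired[key] = now
        return rule["proposal"]
```

Passing in the random number generator keeps the behavior varied in production while still allowing a seeded, repeatable run when debugging.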

Self-Healing AI Systems: Handling Failures Automatically

Autonomous systems will encounter failures. The question is not whether something will break, but whether the system can recover without human intervention every time it does.

We built three recovery mechanisms:

- Stuck task detection: a background process scans ops_mission_steps for steps that have been in ‘in-progress’ status longer than a configurable threshold. If a step exceeds its allowed execution window without completing or failing, it is marked as failed, and the mission finalization logic runs. This handles VPS-level failures where a worker dies mid-execution without cleanly writing a failure status.

- Mission finalization logic: when all steps in a mission are either completed or failed, the finalization process runs. It calculates mission success/failure status, emits a finalization event, and clears the mission from the active queue. This runs whether the mission succeeded or failed, ensuring a clean queue state regardless of outcome.

- Failure event reactions: failed missions emit failure events that can trigger diagnostic proposals, essentially asking Sage or Minion to look at what went wrong and decide whether to retry, escalate, or abandon the work. Not every failure triggers a retry; the policy engine determines whether a retry is warranted based on failure type and retry history.

The principle underlying all three: the system must be able to reach a stable state from any failure mode without human intervention. If a human has to manually clean up stuck missions, manage queue state, or reset agent behavior after failures, the system is not truly autonomous; it is semi-automated with a human as the error handler.
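The first two mechanisms can be sketched in a few lines. This is an in-memory illustration under assumed field names (`started_at`, the status strings); event emission and the failure reactions are left out to keep it short.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class Step:
    mission_id: int
    status: str  # pending | in_progress | completed | failed
    started_at: Optional[datetime] = None


def fail_stuck_steps(steps: List[Step], now: datetime, max_runtime: timedelta) -> int:
    """Mark steps that have been in progress for too long as failed."""
    count = 0
    for step in steps:
        if (
            step.status == "in_progress"
            and step.started_at is not None
            and now - step.started_at > max_runtime
        ):
            step.status = "failed"
            count += 1
    return count


def finalize_mission(mission_id: int, steps: List[Step]) -> Optional[str]:
    """Return the final mission status once every step has settled, else None."""
    own = [s for s in steps if s.mission_id == mission_id]
    if not own or any(s.status not in ("completed", "failed") for s in own):
        return None  # still has pending or running steps
    return "succeeded" if all(s.status == "completed" for s in own) else "failed"
```

Running the stuck-step scan before finalization is what lets a mission with a dead worker settle into a failed state instead of staying active indefinitely.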

Database Design For An AI Agent Company

The schema is the foundation on which everything else is built. Getting it wrong early creates cascading problems: agents reading stale state, finalization logic failing on unexpected null values, and event logs becoming ambiguous. Here is the core structure we settled on after iteration:

A few design decisions worth noting: the policy parameters column is JSONB rather than a fixed schema, which means policy rules can have arbitrary structure without requiring schema migrations when a new policy type is added. The event log includes mission_id and step_id foreign keys, which makes it trivial to pull the full execution history of any mission from a single query. And every table has a created_at or emitted_at timestamp; auditable chronology is non-negotiable in a system where you will regularly need to reconstruct what happened and why.
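For readers who prefer code to diagrams, here is a rough sketch of the five record shapes as Python dataclasses. The table names, the mission_id and step_id links, the JSON-style policy parameters, and the timestamps come from the description above; every other field name is an assumption added to make the example complete.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Any, Dict, List, Optional


@dataclass
class MissionProposal:  # ops_mission_proposals
    id: int
    description: str
    requesting_agent: str
    target_agent: str
    status: str  # pending | approved | rejected
    created_at: datetime


@dataclass
class Mission:  # ops_missions
    id: int
    proposal_id: int
    status: str
    created_at: datetime


@dataclass
class MissionStep:  # ops_mission_steps
    id: int
    mission_id: int
    step_type: str
    status: str
    created_at: datetime


@dataclass
class AgentEvent:  # ops_agent_events
    id: int
    event_type: str
    emitted_at: datetime
    mission_id: Optional[int] = None
    step_id: Optional[int] = None
    payload: Dict[str, Any] = field(default_factory=dict)


@dataclass
class Policy:  # ops_policy
    id: int
    kind: str
    params: Dict[str, Any]  # free-form, mirroring the JSONB column
    created_at: datetime


def mission_history(events: List[AgentEvent], mission_id: int) -> List[AgentEvent]:
    """All events for one mission, oldest first."""
    return sorted(
        (e for e in events if e.mission_id == mission_id),
        key=lambda e: e.emitted_at,
    )
```

`mission_history` is the in-memory equivalent of the single query mentioned above: filter the event log by mission_id and order by emitted_at.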

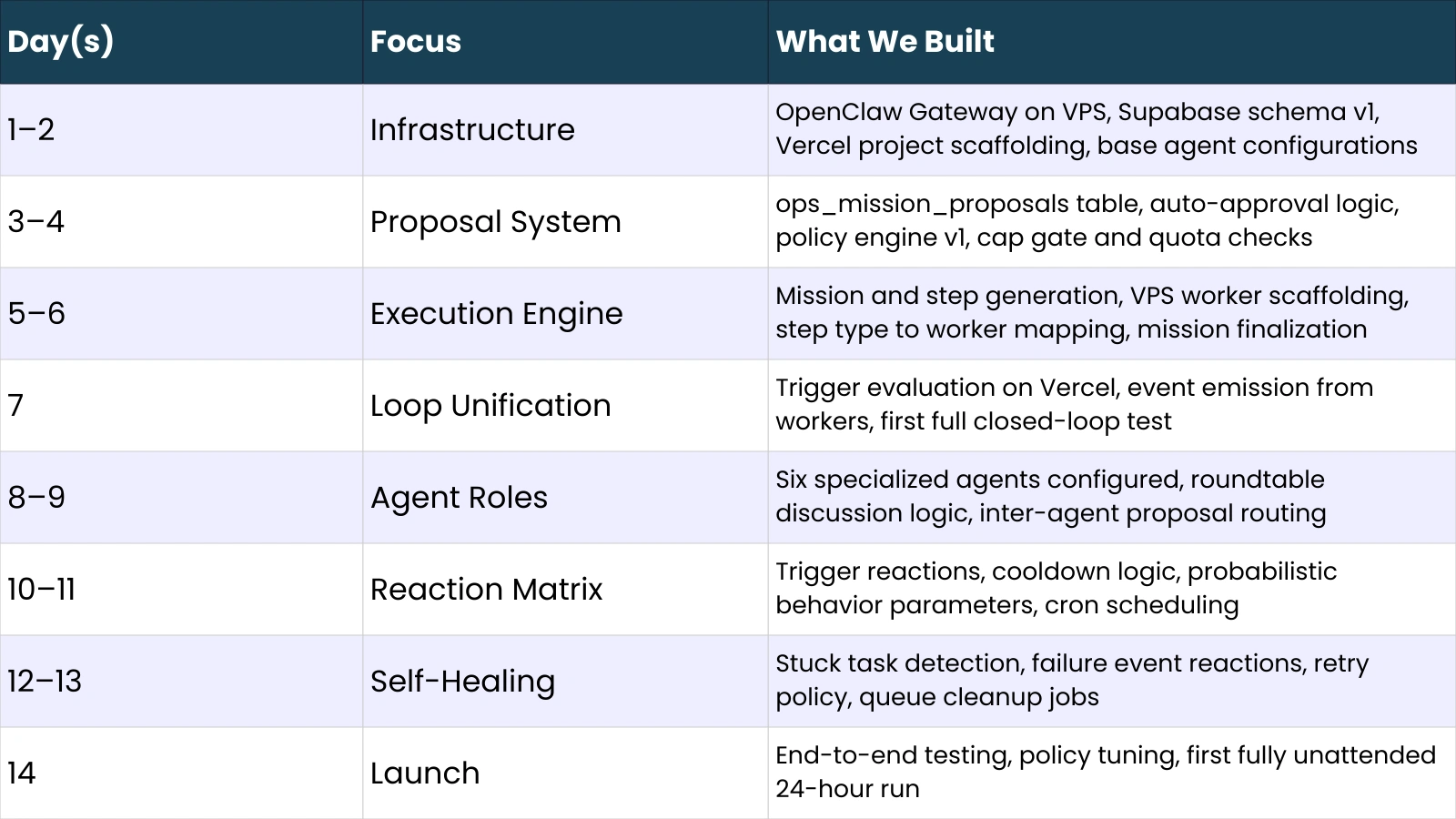

Timeline: How We Built An AI Company In Two Weeks

The core closed loop in OpenClaw, from proposal to execution to event to reaction, was working by Day 7. Everything after that was reliability engineering: making sure the system could run for 24 hours without requiring intervention, handle unexpected failures gracefully, and produce consistent output quality across agent roles.

What It Actually Means To Run A Self-Operating AI Business

Here is an honest accounting of what runs unattended and what still needs humans:

- Fully unattended: daily research cycles, content drafting and scheduling, social media posting within quota limits, quality control review passes, performance monitoring, and trigger evaluation.

- Requires human oversight: strategic direction changes, exception handling for novel failure modes not covered by existing recovery logic, final approval on high-stakes content categories, and periodic policy tuning as external conditions change.

The ‘free will’ the system appears to have is simulated through the probabilistic reaction matrix and the policy engine’s runtime configurability. The agents are not making genuinely autonomous strategic decisions; they are executing well-defined roles with enough variability to avoid mechanical predictability. That is sufficient for operational autonomy. It is not the same as strategic intelligence.

What we are improving next: better inter-agent feedback loops so Observer’s quality assessments actually influence how Quill and Xalt approach similar tasks in future missions, deeper integration between Sage’s strategic output and Scout’s research targeting, and more granular failure classification so the recovery logic can make smarter retry decisions.

Key Lessons for Anyone Building AI Agent Infrastructure

- Separate execution from control. The component that decides what should happen and the component that makes it happen should be distinct, independently deployable, and failure-isolated from each other.

- Centralize proposal logic. All work requests should flow through a single proposal creation and approval path. Multiple entry points create orphaned proposals and policy gaps that are painful to debug.

- Reject at the gate. Every constraint (quotas, caps, and dependencies) should be evaluated at proposal approval time. Work that cannot be completed should never enter the execution queue.

- Everything must emit events. If something happens and it does not create an event record, it effectively did not happen from the system’s perspective. Comprehensive event emission is what makes autonomous systems debuggable and recoverable.

- Build recovery from day one. Stuck task detection, mission finalization logic, and failure reactions are not nice-to-haves. An autonomous system that requires human intervention to recover from failures is not autonomous; it is semi-automated.

- Externalize configuration. Quotas, caps, behavioral rules, and scheduling parameters should live in a policy table, not in code. You will change them frequently during early operation, and code deployments for configuration changes will slow you down significantly.

AI Development & Automation Services Powering Modern Businesses

OpenClaw builds and scales autonomous AI systems. Many companies rely on specialized AI development partners that provide infrastructure, agent design, and LLM integration services. Globussoft.ai helps transform experimental AI workflows into production-ready business automation systems, from intelligent agents to fully operational AI pipelines.

Capabilities

- AI Agent Development: Design and deploy autonomous agents to handle research, content, ops, and customer workflows.

- Custom LLM Chatbots: Build knowledge-trained chatbots for support, onboarding, and user engagement.

- Workflow Automation: Automate repetitive business processes using AI decision systems.

- Model Fine-Tuning: Improve response accuracy with domain-specific training.

- AI Stack Integration: Connect AI systems with existing apps, CRMs, and databases.

- Consulting & Strategy: Identify where AI can drive the most operational impact.

Is This the Future Of AI Startups?

What we built with OpenClaw is not a product; it is infrastructure that could run a product. The distinction matters.

Building closed-loop AI agent systems with OpenClaw is still genuinely hard. It requires careful architecture, significant time spent on reliability engineering, and a willingness to debug failure modes that simply do not exist in simpler systems.

The technology behind OpenClaw is real, and the architectural patterns are proven, but the implementation complexity is not trivial.

What has changed is the accessibility of the components. OpenClaw gives you a production-grade agent gateway that would have taken months to build from scratch two years ago. Supabase gives you a persistent state layer with real-time subscriptions that makes event-driven architecture straightforward. Vercel gives you a serverless control plane that scales independently from your execution workers. The primitives for building autonomous AI operations are genuinely available to small teams now, not just to companies with large infrastructure engineering organizations.

The direction this is heading is away from SaaS tools and toward AI employees: systems that hold a role, operate within a defined mandate, and execute that mandate continuously without requiring human initiation of each task. We are early in that transition. But the architecture that makes it possible is not theoretical. We have been running ours in production for several weeks, and the main thing we keep noticing is how much operational work it is quietly handling that we used to spend hours on ourselves.

Frequently Asked Questions

What is OpenClaw used for?

OpenClaw is an open-source AI agent framework that connects to messaging platforms and routes incoming signals through an LLM-powered agent capable of taking real-world actions: running commands, calling APIs, executing code, and managing scheduled tasks. It is model-agnostic (supporting Claude, GPT, Gemini, and local models) and operates as a persistent gateway process on your own hardware. Beyond personal assistant use cases, it serves as an orchestration layer for multi-agent systems like ours, where its Gateway handles routing, cron scheduling, and event management while specialized agents handle domain-specific work.

Can AI agents actually run a business?

Operationally, yes, with meaningful caveats. Our system handles research, content creation, quality review, and social media publishing without human intervention. What it cannot do is make genuine strategic decisions, handle novel situations outside its defined role boundaries, or recover from failure modes that its self-healing logic was not designed to address. The practical reality is that AI agents can run the execution layer of a business effectively; humans still need to provide strategic direction, handle exceptions, and tune the system’s behavioral parameters as conditions change.

How do you build a multi-agent system?

The core requirements are: a persistent orchestration layer that routes work between agents (we used OpenClaw’s Gateway), a centralized state store that all agents read from and write to (Supabase), a proposal and approval system that governs what work gets created and admitted to the execution queue, and an event system that lets agents react to each other’s outputs. Role separation (giving each agent a defined, narrow mandate rather than relying on one generalist agent) is the most impactful structural decision you can make for stability and output quality.

What database is best for AI agents?

Supabase works well for most multi-agent systems because it combines a relational database (useful for structured state like missions and proposals), JSONB support (useful for flexible policy parameters and event payloads), real-time subscriptions (useful for trigger evaluation that needs to react to new events), and a straightforward API surface that agents can interact with directly. For very high-throughput systems, a dedicated queue like Redis may be needed alongside the relational state store, but for the scale we are operating at, Supabase handles both functions adequately.

Is Vercel good for AI backends?

Vercel is excellent for the control plane of an AI agent system; trigger evaluation, policy checks, proposal routing, and approval logic are all well-suited to serverless functions that need to be fast, stateless, and independently scalable. It is not the right execution environment for long-running or compute-intensive agent tasks, which is why we run those on a persistent VPS. The architecture pattern we recommend: use Vercel for orchestration and decision logic, use a VPS or dedicated compute for actual task execution. Separating these two layers by compute environment enforces the architectural discipline of keeping control and execution separate.