Self-learning AI just shifted the AI world. If you haven’t heard about OpenClaw + Skills yet, now is the time to pay attention.

We’re not talking about a minor update.

We’re talking about a new way AI systems grow, adapt, and operate, without waiting for engineers to retrain them from scratch.

This is the promise of self-learning AI, and OpenClaw is making it real.

What Is OpenClaw + Skills: And Why Does It Matter?

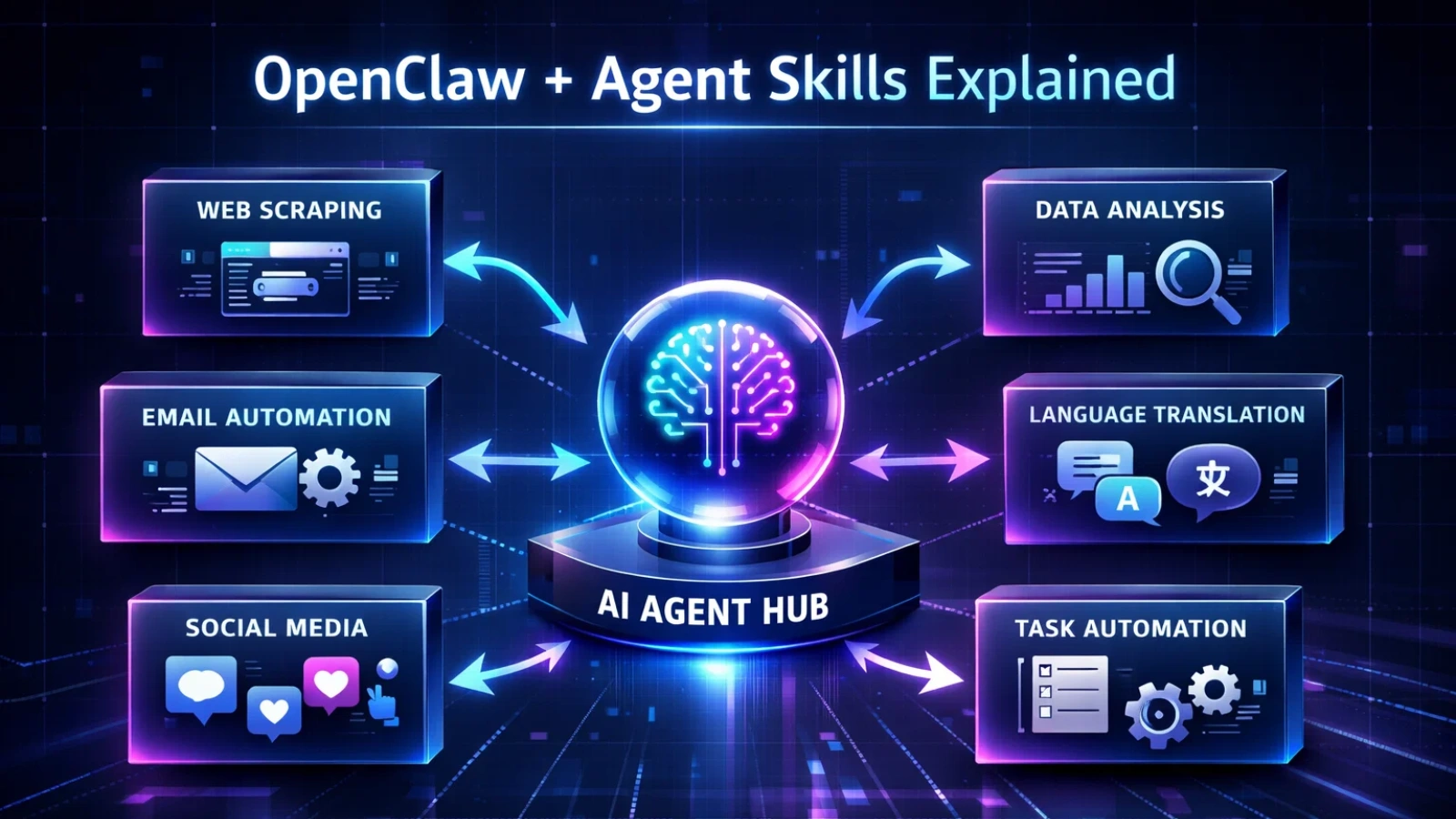

OpenClaw is an AI agent architecture that expands its capabilities through structured Agent Skills. Instead of relying solely on full model retraining, the system allows agents to load packaged expertise, including instructions, workflows, and supporting resources, whenever new tasks arise.

Think of each skill as a portable expertise module. The agent discovers it, understands the context, and begins performing tasks it previously could not handle.
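To make the "portable expertise module" idea concrete, here is a minimal sketch of what a skill package could look like once loaded. It assumes a skill is a text file with a small `---` header followed by instructions; the field names (`name`, `description`, `resources`), the `Skill` class, and `parse_skill` are illustrative assumptions, not OpenClaw's actual API.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    """Hypothetical in-memory form of one skill package."""
    name: str
    description: str
    instructions: str
    resources: list = field(default_factory=list)


def parse_skill(text: str) -> Skill:
    """Parse a skill file: a '---' fenced 'key: value' header, then instructions."""
    lines = text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("skill file must start with a '---' header block")
    # Find the line that closes the header block.
    end = next((i for i in range(1, len(lines)) if lines[i].strip() == "---"), None)
    if end is None:
        raise ValueError("header block is never closed")
    meta = {}
    for line in lines[1:end]:
        if ":" in line:
            key, value = line.split(":", 1)
            meta[key.strip()] = value.strip()
    # 'resources' is an assumed, comma-separated list of supporting files.
    resources = [r.strip() for r in meta.get("resources", "").split(",") if r.strip()]
    return Skill(
        name=meta["name"],
        description=meta["description"],
        instructions="\n".join(lines[end + 1:]).strip(),
        resources=resources,
    )


EXAMPLE = """\
---
name: contract-review
description: Review a contract and flag risky clauses.
resources: checklist.md, clause_library.csv
---
1. Read the contract end to end.
2. Compare each clause against checklist.md.
3. Summarize the flagged clauses.
"""

skill = parse_skill(EXAMPLE)
print(skill.name, skill.resources)
```

The point of the sketch is that a skill is plain, reviewable text: a domain expert can write it, and it can live in version control like any other file.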

Here’s what makes this approach powerful:

- Agents run persistently; they don’t stop and restart between tasks

- They evolve by loading new skills instead of requiring expensive retraining cycles

- Teams across roles (legal, development, finance, and more) can extend agent capabilities in a structured way

- Organizational knowledge can be captured in reusable, version-controlled formats

This is self-learning AI in a practical, deployable form. Instead of waiting for data scientists to retrain models from scratch, the agent can continuously grow by incorporating new skills as needs evolve.

How This Is Different from Traditional AI Training

Traditional AI follows a simple but expensive path. You pre-train on massive data, fine-tune on specific tasks, then deploy. After that, the model is largely frozen.

Want new behavior? You typically go back to the beginning, retrain, relabel, and spend months plus a significant budget.

OpenClaw breaks that cycle.

A self-learning AI model built on this architecture can expand its capabilities by loading structured Agent Skills on demand. The underlying math of large language models stays mostly the same, and the model itself is not retrained. What changes is the loop through which new capability reaches the agent.

Instead of retraining the entire model, teams package expertise into reusable skills. The agent discovers the skill, loads the context, and applies it to the task.
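The discover, load, apply loop described above can be sketched in a few lines. This is a toy illustration under stated assumptions: the `SKILLS` registry, the `discover` and `build_prompt` helpers, and the word-overlap matching are all hypothetical stand-ins. A real agent would more likely let the model itself, or an embedding search, pick the skill.

```python
import re
from typing import Optional

# Hypothetical skill registry: name -> (description, instructions).
SKILLS = {
    "contract-review": (
        "Review a contract and flag risky clauses",
        "Check each clause against the firm's checklist.",
    ),
    "financial-analysis": (
        "Analyze a financial dataset and summarize trends",
        "Load the dataset, compute period-over-period changes, report outliers.",
    ),
}

# Require at least this many shared words, so filler words alone never match.
MIN_OVERLAP = 2


def tokens(text: str) -> set:
    """Lowercase alphabetic words in the text."""
    return set(re.findall(r"[a-z]+", text.lower()))


def discover(task: str) -> Optional[str]:
    """Return the skill whose description shares the most words with the task."""
    best_name, best_score = None, MIN_OVERLAP - 1
    for name, (description, _) in SKILLS.items():
        score = len(tokens(task) & tokens(description))
        if score > best_score:
            best_name, best_score = name, score
    return best_name


def build_prompt(task: str) -> str:
    """Load the matching skill's instructions into the context, if any."""
    name = discover(task)
    if name is None:
        return task  # no skill applies; the base model handles the task alone
    _, instructions = SKILLS[name]
    return f"[skill: {name}]\n{instructions}\n\nTask: {task}"


print(build_prompt("Please review this supplier contract for risky clauses"))
```

Notice that nothing in the model changes here. Adding a capability means adding an entry to the registry, which is why iteration can be so much faster than a retraining cycle.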

This shifts the core question from “how do we retrain?” to “how effectively can we package and deliver expertise?” That’s a faster, more modular, and often far more cost-efficient problem to solve.

What Agent Skills Actually Enable

To understand the real impact of self-learning AI systems, you have to look at what skills unlock in practice.

- Domain expertise on demand

Teams can package specialized knowledge, from legal review workflows to financial analysis, into reusable skill modules.

- New executable capabilities

Skills can give agents the ability to perform concrete actions like creating presentations, analyzing datasets, or building automation pipelines.

- Repeatable, auditable workflows

Multi-step processes can be standardized into consistent workflows, improving reliability and compliance.

- Cross-platform interoperability

Because the format is open and widely adopted, the same skill can work across multiple compatible agent environments.

This is why the ecosystem is paying attention. Skills don’t just make agents smarter. They make expertise portable.

Can Self-Learning AI Agents Match Fine-Tuned Models?

This is the big debate right now.

Fine-tuned models still lead in raw precision. They’re trained on carefully curated datasets, rigorously tested, and benchmarked. For regulated industries, they remain the safer and more predictable option.

But here’s the tension: a vertical AI startup might spend six months fine-tuning a model for contract law. Meanwhile, a domain expert using OpenClaw can package legal expertise into structured Agent Skills and iterate rapidly.

Speed vs depth. Agility vs accuracy.

The honest answer? Neither approach wins outright yet. The most likely near-term outcome is a hybrid model: fine-tuning for stability and core intelligence, paired with a self-learning AI agent layer that enables continuous adaptation and fast capability expansion.

The strongest teams won’t choose one path. They’ll combine both.

Consumer Experiment or Enterprise Shift?

Right now, OpenClaw-style agents are mostly being explored by developers and early adopters. That’s normal. New paradigms always start at the edges.

But enterprise adoption is coming, and faster than most expect.

Projects like Hyperagent (from Airtable’s Howie Liu) are already betting that self-learning AI will move from consumer playgrounds into serious enterprise workflows. And it makes sense. Enterprises are drowning in repetitive processes. They need automation that adapts. Static copilots are no longer enough.

The horizontal AI platforms (the Gleans, the ServiceNows, the Sierras of the world) will need to evolve. A static assistant that can’t grow is quickly becoming a liability.

The shift from “copilot” to “adaptive agent” isn’t a feature update. It’s an architectural rethink.

What This Means for Vertical AI Industries

Here’s where it gets really interesting, and a little disruptive.

Legal, finance, and healthcare have been fertile ground for self-learning AI applications. These are domains with dense, specialized knowledge. They need AI that goes deep, not wide.

Could we see an “OpenClaw for Legal”: a self-learning AI agent equipped with a contract-drafting SKILL file that rivals what top AI startups deliver today? It’s not far-fetched.

But vertical AI isn’t simple. A few real challenges remain:

- Reliability: Can an agent driven by skill files match the consistency of a fine-tuned model?

- Compliance: In regulated industries, “good enough” isn’t good enough

- Domain depth: Some expertise is hard to codify in a markdown file

Still, the pressure on vertical AI startups is real. When building AI capability becomes faster and more modular, the moats get thinner. The startups that adapt, figuring out how to combine fine-tuning depth with agent-driven speed, will thrive. The ones that don’t will get lapped.

Will OpenClaw Change AI Economics?

This might be the most underrated part of the story.

Post-training is expensive. Human feedback, data labeling, RLHF pipelines: these costs add up fast. Companies like Mercor, Surge, and others have built businesses around this reality.

If self-learning AI reduces the need for heavy retraining cycles, the economics of AI development could shift dramatically. Pricing becomes more sustainable. Development timelines compress. Smaller teams can build more capable systems.

This doesn’t mean the labeling and data companies disappear. But it does mean they’ll need to adapt. The demand for raw labeling may shrink. The demand for high-quality, domain-specific skill documentation may grow.

It’s not a collapse. It’s a reallocation.

How Globussoft AI Helps You Build Adaptive AI Systems

For many businesses, getting OpenClaw running is only step one. The real impact comes from building AI workflows that are reliable, scalable, and aligned with business goals.

This is where Globussoft.ai adds serious value.

While the OpenClaw AI agent provides the open-source automation engine, Globussoft AI helps organizations design and deploy production-ready AI systems that actually perform in real-world environments.

Key Capabilities That Accelerate OpenClaw Deployments

AI Agent Development

Design and deploy intelligent AI agents that streamline customer support and internal operations. These agents handle repetitive tasks, reduce manual errors, and deliver fast, personalized interactions.

LLM and Knowledge Base–Powered Chatbots

Build context-aware chatbots that combine large language models with your internal knowledge base for accurate, business-specific responses across customer and employee touchpoints.

LLM Testing & Fine-Tuning

Optimize model performance, reduce hallucinations, and improve response accuracy through structured evaluation and fine-tuning workflows.

AI/ML Pipeline Replication

Recreate and standardize successful AI pipelines so teams can scale automation consistently across departments and environments.

AI/ML Consulting

Get strategic guidance on architecture, model selection, cost optimization, and long-term AI adoption planning.

AI/ML Integration

Integrate OpenClaw agents seamlessly with existing business tools, CRMs, databases, and communication platforms.

In simple terms, OpenClaw provides the automation backbone, and Globussoft AI helps turn it into a production-grade business system.

For teams that want faster deployment, fewer configuration mistakes, and automation that actually scales, expert support can dramatically shorten the path from experiment to real operational impact.

Final Takeaway

OpenClaw + Skills doesn’t rewrite the fundamental math of AI. But it meaningfully changes what happens after deployment, and that’s where most of the real-world value lives.

The self-learning AI model paradigm is still maturing. Skill files won’t replace years of fine-tuning overnight. But they’re a powerful new tool. And the teams that learn to use them well, combining structured training with continuous agent learning, will have a serious edge.

The question isn’t whether self-learning AI systems will become mainstream. It’s how fast, and who gets there first.

Experiment early. Combine approaches wisely. Focus on reliability over hype.

That’s how you win in the agent era.

Frequently Asked Questions

- What exactly goes inside an Agent Skill package?

An Agent Skill typically includes structured instructions, workflow logic, scripts or tools, and supporting context. Together, these components help the agent understand not just what to do, but how to execute tasks reliably.

- Do Agent Skills require coding knowledge to create?

Not always. While advanced skills may include scripts, many can be authored by domain experts using structured instructions and clear workflow steps. This opens the door for non-developers to contribute expertise.

- How do agents decide which skill to use for a task?

Skill-compatible agents use task understanding, context matching, and retrieval mechanisms to discover relevant skills. The agent then loads the appropriate capability package when it detects a matching need.

- Are Agent Skills secure for enterprise environments?

They can be, when properly governed. Enterprises typically implement version control, access permissions, validation layers, and audit trails to ensure skills are safe, compliant, and trustworthy.

- What is the biggest limitation of the Agent Skills approach today?

The main challenge is consistency at scale. Packaging expertise well requires careful structure, testing, and iteration. Poorly designed skills can lead to uneven performance, which is why strong skill authoring practices matter.
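The governance and testing points in the answers above (validation layers, version control, audit trails) can be sketched as a small approval gate. Everything here is an assumption made for illustration: the required fields, the MAJOR.MINOR.PATCH version rule, and the `validate_skill`, `fingerprint`, and `approve` helpers are hypothetical, not part of OpenClaw or any specific product.

```python
import hashlib

# Assumed minimum metadata a team might require before a skill goes live.
REQUIRED_FIELDS = ("name", "version", "owner", "instructions")


def validate_skill(skill: dict) -> list:
    """Return a list of problems; an empty list means the skill passes."""
    problems = [f"missing field: {f}" for f in REQUIRED_FIELDS if not skill.get(f)]
    version = str(skill.get("version", ""))
    parts = version.split(".")
    if version and not (len(parts) == 3 and all(p.isdigit() for p in parts)):
        problems.append(f"version is not MAJOR.MINOR.PATCH: {version}")
    return problems


def fingerprint(skill: dict) -> str:
    """SHA-256 of the instructions, so an audit entry pins the exact content."""
    return hashlib.sha256(skill["instructions"].encode("utf-8")).hexdigest()


def approve(skill: dict, audit_log: list) -> bool:
    """Validate the skill and record the decision, approved or not."""
    problems = validate_skill(skill)
    audit_log.append({
        "skill": skill.get("name"),
        "version": skill.get("version"),
        "sha256": fingerprint(skill) if skill.get("instructions") else None,
        "approved": not problems,
        "problems": problems,
    })
    return not problems


log = []
good = {"name": "contract-review", "version": "1.2.0", "owner": "legal-ops",
        "instructions": "Check each clause against the checklist."}
bad = {"name": "draft-skill", "version": "1.0",
       "instructions": "Do the thing."}

print(approve(good, log), approve(bad, log))
```

A gate like this does not make a skill good, but it does make every change traceable, which is the baseline regulated teams tend to ask for first.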